A key capability of a developer platform is to enable developers to expose services to the Internet as seamlessly as possible. We implement this capability with a developer platform feature called platform ingress. Without this capability, every engineering team is burdened with:

- Load Balancing Architecture (at the time of writing GCP has 9 options for this)

- DNS

- TLS provisioning and rotation

- CDNs

- WAF

- Observability: access logs, metrics, HTTP-based SLOs

Platform engineers can offer this capability in a way that enables developers while also meeting security stakeholders’ needs, by making it conform to the company’s security and encryption standards.

However, due to the number of options available, this is a daunting task for a platform engineer. This article describes a tried and tested solution that is part of CECG’s developer platform and is also in use in many of our clients’ bespoke solutions.

What is Ingress?

Ingress deals with how an HTTP request gets from your customer’s web browser or mobile application to the services that your engineers develop. For example, if your developers build HTTP-based microservices in Java and Spring Boot, each service will open a TCP port to accept HTTP requests. But how does the traffic get there? A typical setup will include:

Edge (Global) -> Regional LB -> App

In the context of Kubernetes, it is likely to be:

Edge (Global) -> Cloud LB -> Ingress Controller -> Pod (container)

Each of these layers comes with many architectural and technology choices, e.g. which external LB to use? At what point is TLS terminated? Which TLS certs are at what layer?

For this article, we are only considering HTTP ingress. UDP and raw TCP Ingress are also features of developer platforms but are not discussed here.

What are the considerations for Ingress in a GKE-based Developer Platform?

If we were implementing platform ingress from scratch, we would need to consider the following:

- What is the developer interface? How do developers configure Ingress for their applications?

- DNS: How are records created?

- TLS requirement: How are certificates created and rotated?

- Web Application Firewalls (WAFs)

- Content Delivery Networks (CDNs)

- Traffic segregation between tenants

- Monitoring and alerting

- HTTP-based SLOs

Developer Interface: Gateway API vs Ingress API

Every platform capability should first concern itself with two questions: What is the developer interface? Is enough autonomy given to the developer? For a Kubernetes-based developer platform, the interface for developers can be:

- Ingress API

- Gateway API, specifically HTTPRoute

- Custom e.g. a CRD

At the time of writing, we consider the Ingress API to be the most stable API to expose as a developer interface. However, our solution includes the use of the Gateway API for external load balancing.

Internal vs External Ingress Controllers

GKE comes with two built-in capabilities, the GKE Ingress Controller and the GKE Gateway Controller. Both of these create Cloud Load Balancers based on Kubernetes Ingress and HTTPRoute resources. We call these external ingress controllers, as they don’t run anything inside the cluster, but instead configure external Cloud Load Balancers.

Internal ingress controllers run inside the cluster. Examples are Traefik and NGINX, which reconfigure themselves based on Ingress and/or Gateway resources.

We use both in our solutions, as shown below. The reasons for not relying on an external ingress controller alone are:

- Developer responsiveness: an ingress solution that creates a new LB, certificate, and external IP for every developer application will be slow, whereas internal ingress controllers fronted by an external ingress controller can be configured nearly instantly

- Our platforms are built to scale to 1000s of applications, and having 1000s of external LBs/IPs/certs typically doesn’t scale

- External ingress controllers typically have less HTTP routing capability than internal ones such as Traefik

- Internal ingress controllers allow us to build far more out-of-the-box observability for developers

What’s our go-to architecture?

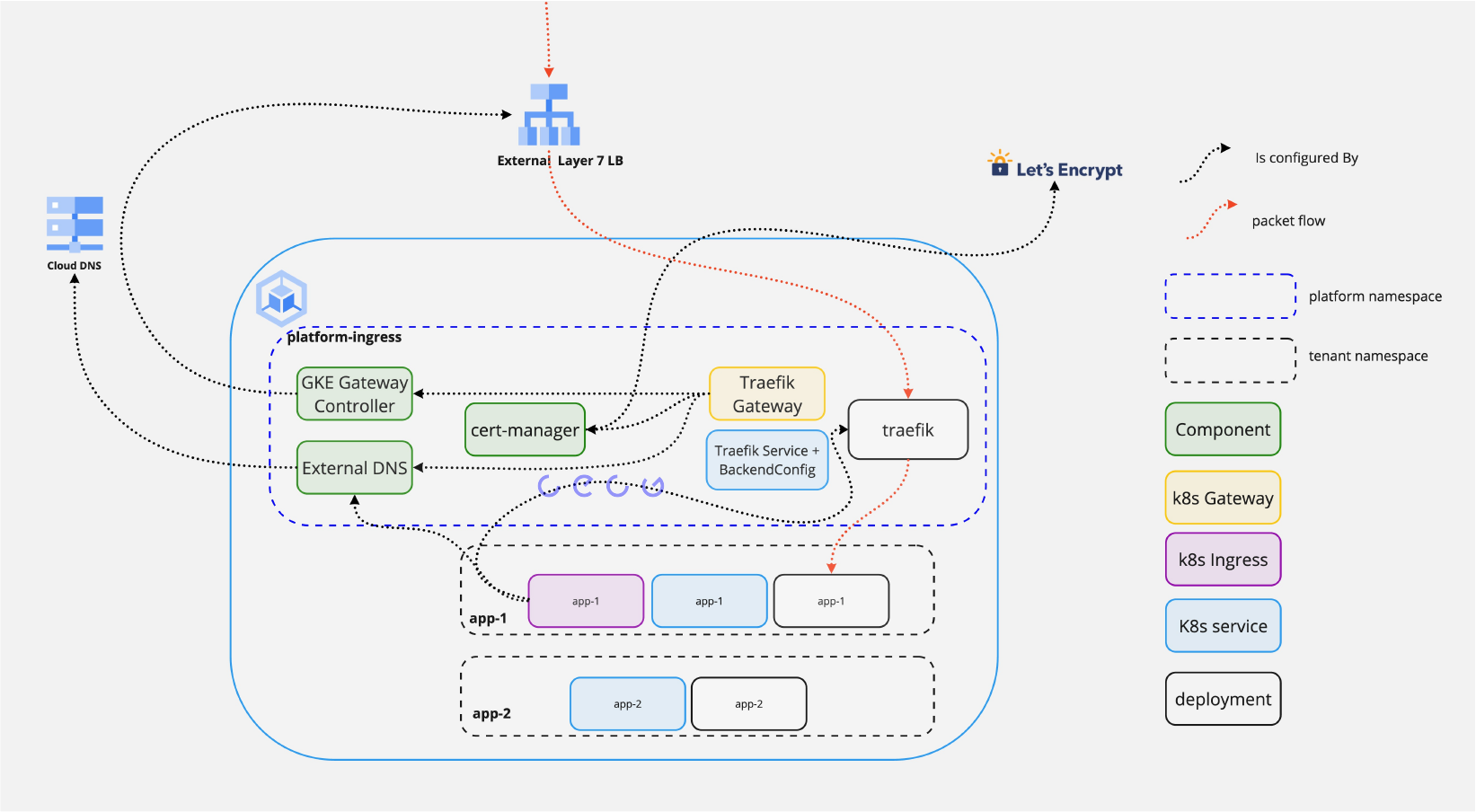

Designing an Ingress architecture for a Developer Platform can be a multi-month process for a company starting from scratch, evaluating Load Balancers, Ingress Controllers, and Certificate Management solutions. We have a set of reference architectures, with the following being the one built into our off-the-shelf developer platform.

- A CECG developer platform is configured with a set of ingress_domains that it has permission to expose services on.

- The developer interface is standard Kubernetes Ingress, and developers are allowed to create Ingress resources under those ingress_domains. A policy can be added to further restrict this, e.g. tenant 1 can only use ingress_domain x.

From just the creation of the Ingress resource, the application will be exposed to the Internet in seconds via TLS with no further configuration.
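As a rough illustration of that interface, the sketch below builds a standard `networking.k8s.io/v1` Ingress manifest as a Python dictionary and checks its hosts against the platform’s allowed domains. The domain names, the `platform-ingress` class name, and the helper functions are all hypothetical; a real platform would more likely enforce this with an admission policy than with application code.

```python
from typing import Any

# Hypothetical values: the domains this platform is allowed to expose services on.
INGRESS_DOMAINS = ["apps.example.com", "internal.example.com"]


def host_allowed(host: str, allowed_domains: list[str]) -> bool:
    """Return True if host is a subdomain of one of the allowed domains."""
    host = host.lower().rstrip(".")
    return any(host.endswith("." + domain.lower()) for domain in allowed_domains)


def build_ingress(name: str, namespace: str, host: str, service: str, port: int,
                  ingress_class: str = "platform-ingress") -> dict[str, Any]:
    """Build a minimal Ingress manifest routing all paths on host to one Service."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "Ingress",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "ingressClassName": ingress_class,  # assumed class name
            "rules": [{
                "host": host,
                "http": {"paths": [{
                    "path": "/",
                    "pathType": "Prefix",
                    "backend": {"service": {"name": service,
                                            "port": {"number": port}}},
                }]},
            }],
        },
    }


def validate_ingress(ingress: dict[str, Any], allowed_domains: list[str]) -> list[str]:
    """Return one error message per rule whose host is outside the allowed domains."""
    errors = []
    for rule in ingress["spec"].get("rules", []):
        host = rule.get("host", "")
        if not host_allowed(host, allowed_domains):
            errors.append(f"host {host!r} is not under an allowed ingress domain")
    return errors


good = build_ingress("shop", "team-a", "shop.apps.example.com", "shop", 8080)
bad = build_ingress("shop", "team-a", "shop.example.org", "shop", 8080)
print(validate_ingress(good, INGRESS_DOMAINS))
print(validate_ingress(bad, INGRESS_DOMAINS))
```

The check requires a full label boundary (a leading dot), so a look-alike host such as `evilapps.example.com` is not treated as being under `apps.example.com`.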

To achieve this we use the following components (the diagram does not specify how these are deployed):

- Global External L7 Load Balancer

- GKE Gateway Controller to create that load balancer

- Cert Manager to manage the lifecycle of the certificates for that load balancer

- Let’s Encrypt to issue the certificates dynamically

- External DNS to dynamically create the DNS records for all the tenant applications

Why Ingress API as the tenant interface rather than the Gateway API?

- It is more stable and known by the majority of developers, and we can migrate to the Gateway API seamlessly in the future

- Policy can be used to stop users misconfiguring the ingress class or host
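To give a feel for why a later migration can be largely mechanical, here is a minimal sketch that maps a simple Ingress manifest onto Gateway API HTTPRoute manifests. It only handles host, path, and Service backend, and the Gateway name and namespace are hypothetical; it is not a description of how our platform performs the migration.

```python
from typing import Any

# Ingress pathType values and their HTTPRoute path match equivalents.
PATH_TYPES = {"Prefix": "PathPrefix", "Exact": "Exact"}

# A hypothetical tenant Ingress used as input.
EXAMPLE_INGRESS: dict[str, Any] = {
    "apiVersion": "networking.k8s.io/v1",
    "kind": "Ingress",
    "metadata": {"name": "shop", "namespace": "team-a"},
    "spec": {"rules": [{
        "host": "shop.apps.example.com",
        "http": {"paths": [{
            "path": "/",
            "pathType": "Prefix",
            "backend": {"service": {"name": "shop", "port": {"number": 8080}}},
        }]},
    }]},
}


def ingress_to_httproutes(ingress: dict[str, Any], gateway_name: str,
                          gateway_namespace: str) -> list[dict[str, Any]]:
    """Create one HTTPRoute per Ingress rule, keeping host, paths and backends."""
    meta = ingress["metadata"]
    routes = []
    for index, rule in enumerate(ingress["spec"].get("rules", [])):
        http_rules = []
        for path in rule["http"]["paths"]:
            if path["pathType"] not in PATH_TYPES:
                raise ValueError(f"unsupported pathType: {path['pathType']}")
            service = path["backend"]["service"]
            http_rules.append({
                "matches": [{"path": {"type": PATH_TYPES[path["pathType"]],
                                      "value": path["path"]}}],
                "backendRefs": [{"name": service["name"],
                                 "port": service["port"]["number"]}],
            })
        routes.append({
            "apiVersion": "gateway.networking.k8s.io/v1",
            "kind": "HTTPRoute",
            "metadata": {"name": f"{meta['name']}-{index}",
                         "namespace": meta["namespace"]},
            "spec": {
                "parentRefs": [{"name": gateway_name,
                                "namespace": gateway_namespace}],
                "hostnames": [rule["host"]],
                "rules": http_rules,
            },
        })
    return routes


routes = ingress_to_httproutes(EXAMPLE_INGRESS, "platform-gateway", "platform-ingress")
print(routes[0]["spec"]["hostnames"])
```

Anything beyond this simple shape, such as controller-specific annotations or the `ImplementationSpecific` path type, would need case-by-case handling.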

Why a Global External L7?

- To build in features such as CDN and WAF, and to enable multi-region deployments

Why configure Global External L7 with Gateway API rather than more statically?

- To dynamically create certificates

- To support segregated Ingress (see below) with no manual actions

- The GKE Ingress controller does not support

Why Cert Manager?

- To enable ACME/Let’s Encrypt as a default solution for certs with no manual actions for new environments

- To support other TLS issuers for those customers not able to use Let’s Encrypt

Why External DNS?

- Developer experience! Dynamically create records for their services as they create Ingress resources
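The idea can be sketched as a small reconciliation step: collect the hosts declared on tenant Ingress resources and derive the DNS records that should exist. This is only a conceptual illustration, not how External DNS is implemented; the function name is hypothetical, the IP address is from a documentation range, and we assume every host should resolve to the single address of the global load balancer.

```python
from typing import Any


def desired_records(ingresses: list[dict[str, Any]], lb_ip: str) -> list[dict[str, str]]:
    """Return one A record per unique Ingress host, pointing at the load balancer."""
    hosts = {
        rule["host"]
        for ingress in ingresses
        for rule in ingress["spec"].get("rules", [])
        if rule.get("host")
    }
    # Sort for a stable, diff-friendly result.
    return [{"name": host, "type": "A", "value": lb_ip} for host in sorted(hosts)]


# Two hypothetical tenant Ingress resources, reduced to the fields used here.
tenant_ingresses = [
    {"spec": {"rules": [{"host": "shop.apps.example.com"}]}},
    {"spec": {"rules": [{"host": "cart.apps.example.com"},
                        {"host": "shop.apps.example.com"}]}},
]

records = desired_records(tenant_ingresses, "203.0.113.10")
for record in records:
    print(record["name"], record["type"], record["value"])
```

Duplicate hosts collapse into a single record, so the output contains two records for the three rules above.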

Why is Traefik the Internal Ingress controller?

- There are many good options here. We have the most production experience with Traefik. Contour was also considered and is in use by our clients.

Segregated Ingress

As multi-tenancy adoption increases, tenants of different classes, e.g. two different business areas, may need to be further isolated. The above solution can be deployed multiple times in the same cluster with different ingress classes so that tenants don’t share the same load balancer and ingress controller instances. We use this feature to enable:

- Ingress of different types, e.g. external vs internal within the organisation

- Further segregation of observability e.g. access logs

- Scaling ingress controllers

- Canarying ingress changes to a subset of tenants
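One way to picture segregated ingress is as a mapping from tenant class to a dedicated deployment of the stack, each identified by its own ingress class. The sketch below assumes hypothetical tenant classes and ingress class names, and shows a check that a tenant’s Ingress targets the stack assigned to it.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class IngressStack:
    """One deployment of the load balancer plus ingress controller."""
    ingress_class: str
    tenant_classes: frozenset


# Hypothetical stacks: each serves a different class of tenant.
STACKS = [
    IngressStack("platform-external", frozenset({"retail"})),
    IngressStack("platform-internal", frozenset({"back-office"})),
]


def stack_for(tenant_class: str, stacks: list[IngressStack] = STACKS) -> IngressStack:
    """Find the stack that serves the given tenant class."""
    for stack in stacks:
        if tenant_class in stack.tenant_classes:
            return stack
    raise LookupError(f"no ingress stack configured for tenant class {tenant_class!r}")


def uses_assigned_stack(ingress: dict[str, Any], tenant_class: str,
                        stacks: list[IngressStack] = STACKS) -> bool:
    """Return True if the Ingress names the ingress class assigned to its tenant."""
    assigned = stack_for(tenant_class, stacks)
    return ingress["spec"].get("ingressClassName") == assigned.ingress_class


ingress = {"spec": {"ingressClassName": "platform-internal", "rules": []}}
print(uses_assigned_stack(ingress, "back-office"))
print(uses_assigned_stack(ingress, "retail"))
```

Canarying a change to a subset of tenants then amounts to rolling it out to one stack before the others.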

Summary

A platform engineer’s mission is to speed up software delivery. A key performance indicator, and often a blocker at many organisations we work with, is how long it takes to expose a new service to the Internet. The platform ingress feature, when implemented correctly, can reduce this time from weeks to seconds, allowing your engineers to focus on product delivery rather than managing cloud infrastructure.